English

Paperback

₹1877

₹1899

1.16% OFF

(All inclusive*)

Delivery Options

Please enter pincode to check delivery time.

*COD & Shipping Charges may apply on certain items.

Review final details at checkout.

About The Book

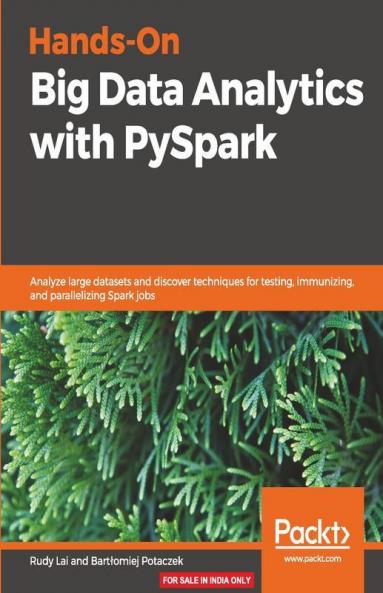

Use PySpark to easily crush messy data at scale and discover proven techniques to create testable, immutable, and easily parallelizable Spark jobs.

Key Features

- Work with large amounts of agile data using distributed datasets and in-memory caching
- Source data from all popular data hosting platforms, such as HDFS, Hive, JSON, and S3
- Employ the easy-to-use PySpark API to deploy big data analytics for production

Book Description

Apache Spark is an open source parallel-processing framework that has been around for quite some time now. One of the many uses of Apache Spark is for data analytics applications across clustered computers. In this book, you will not only learn how to use Spark and the Python API to create high-performance analytics with big data, but also discover techniques for testing, immunizing, and parallelizing Spark jobs.

You will learn how to source data from all popular data hosting platforms, including HDFS, Hive, JSON, and S3, and deal with large datasets with PySpark to gain practical big data experience. This book will help you work on prototypes on local machines and subsequently go on to handle messy data in production and at scale. This book covers installing and setting up PySpark, RDD operations, big data cleaning and wrangling, and aggregating and summarizing data into useful reports. You will also learn how to implement some practical and proven techniques to improve certain aspects of programming and administration in Apache Spark.

By the end of the book, you will be able to build big data analytical solutions using the various PySpark offerings and also optimize them effectively.

What you will learn

- Get practical big data experience while working on messy datasets
- Analyze patterns with Spark SQL to improve your business intelligence
- Use PySpark's interactive shell to speed up development time
- Create highly concurrent Spark programs by leveraging immutability
- Discover ways to avoid the most expensive operation in the Spark API: the shuffle operation
- Re-design your jobs to use reduceByKey instead of groupBy
- Create robust processing pipelines by testing Apache Spark jobs

Who this book is for

This book is for developers, data scientists, business analysts, or anyone who needs to reliably analyze large amounts of large-scale, real-world data. Whether you're tasked with creating your company's business intelligence function, creating great data platforms for your machine learning models, or looking to use code to magnify the impact of your business, this book is for you.

About the Author

Rudy Lai is the founder of QuantCopy, a sales acceleration start-up using AI to write sales emails to prospective customers. Prior to founding QuantCopy, Rudy ran HighDimension.IO, a machine learning consultancy, where he experienced first-hand the frustrations of outbound sales and prospecting. Rudy has also spent more than 5 years in quantitative trading at leading investment banks such as Morgan Stanley. This valuable experience allowed him to witness the power of data, but also the pitfalls of automation using data science and machine learning. He holds a computer science degree from Imperial College London, where he was part of the Dean's list and received awards including the Deutsche Bank Artificial Intelligence prize.
Colibri Digital is a technology consultancy company founded in 2015 by James Cross and Ingrid Funie. The company works to help its clients navigate the rapidly changing and complex world of emerging technologies, with deep expertise in areas such as big data, data science, machine learning, and cloud computing. Over the past few years, they have worked with some of the world's largest and most prestigious companies, including a tier 1 investment bank, a leading management consultancy group, and one of the world's most popular soft drinks companies, helping each of them to better make sense of their data and process it in more intelligent ways. The company

Details

ISBN 13

9781838644130

Publication Date

29-03-2019

Pages

182

Weight

329 grams

Dimensions

191 x 235 x 9.95 mm